I know some may find that title “attention” grabbing, but it is really a statement that holds true for any item (well, aside from food). Pre-ordering is a dangerous gamble at times, and with hardware, as with software, my recommendation has been and always will be the same: wait for independent reviews. Of course, with new hardware or software the hype level kicks in, and new, shiny and amazing always helps captivate the audience and loosen the wallets. Gamers and us tech-enthused people are some of the most susceptible to this.

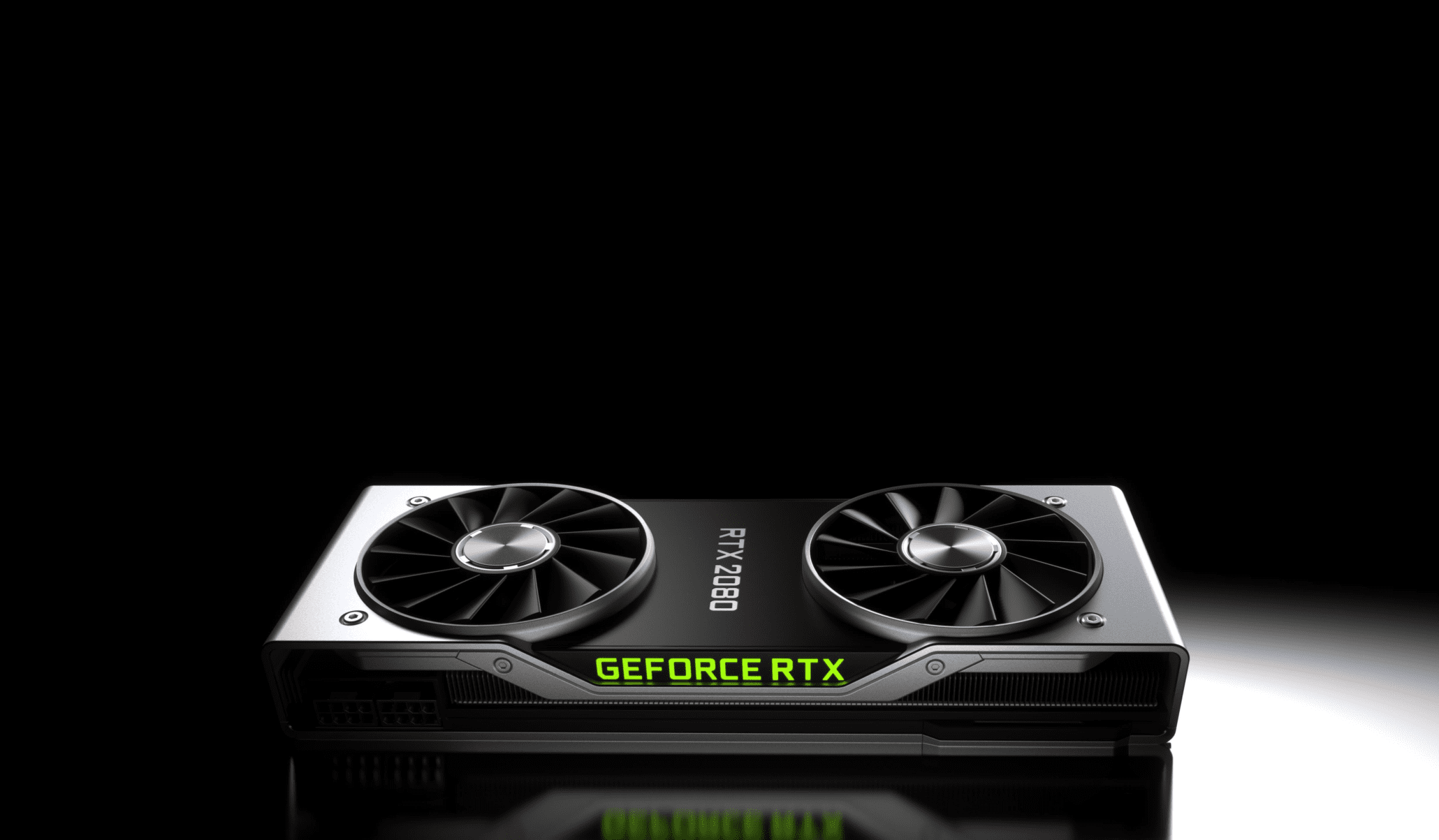

And so it was that Nvidia stepped forth at Gamescom to announce its not very secret new RTX cards, the new name signifying the shift into Ray Tracing. I have covered what this means already in my video and written article. This article will concentrate on what the new cards offer via these new methods and the much, much wider base improvements over the competition, including the outgoing GTX 1080 range.

With the demos all concentrating on the shiny new Ray Tracing core that sits within the chip, the event highlighted the age-old problem that Sony also faced, and largely failed at, when the Pro was launched. Selling new tech can sometimes be akin to the emperor’s new clothes, and the internet as the delivery vessel becomes another factor. Sony tried to sell 4K images on 1080P screens and had to spend much time shaping that message, helped in no small part by channels like mine that better demonstrated the real-world benefits that it, and the later X from MS, actually delivered. Nvidia have started out with very much the same problem, maybe worse due to the significantly higher cost of entry. Much of this is of their own making: standing on your own shoulders each year, boasting improved resolution and frame-rates over your last generation, has conditioned the audience to always expect this above all else.

But not today. 100% of the message was describing better shadows and reflections; this is how the mainstream will see it, though it is not my view. Worse than this, the cost is a perceived step back to 1080P and sub-60fps performance, not really a $1200 prize to say the least. Of course, the detail behind this is far more complicated and impressive, and it IS a step forward for real-time games. Much like console generations, where the desire for 60Hz titles is tempered by the need to upstage the competition with better visuals, these features come with a cost, the biggest Nvidia have ever felt.

To highlight this clearly, both Nvidia and AMD have been making cards that can largely deliver 1440P and upwards resolutions at 60fps or more. If you purchased a new graphics card in the past 3-4 years, chances are you have run games at this rate or higher over that time frame. That pill does seem rather tough to swallow, and compounding this is the fact that ALL the other cards that can still deliver this, and 4K/60 on some games, are half that price or less. Nvidia have become the dominant GPU manufacturer in this regard, something which has clearly gone to their heads with insane and completely anti-consumer decisions, one of which is the huge price increase: almost twice the price of the outgoing 1080Ti is a significant hike in a little over a year.

There is another!

Contrary to what you may think, or what Nvidia will tell you, they are far from the first to hit real-time Ray Tracing solutions in games. Some titles have been using it with hybrid functions comparable to those the RTX cards will use: the recently released Claybook, on even the Xbox One, uses Ray traced cones and Signed Distance Fields to generate all of its world, physics and destruction. AMD have their open source Radeon Rays built on the Vulkan API, so it is possible, and hinted, that we WILL see this appearing on AMD GPUs using compute this year also, and we have likely already seen some of those titles at the event. Even at a hardware level we have seen long-term solutions that mix hardware and software into a genuine solution from the likes of Samsung and pioneering hardware company Imagination, known best for powering Sega’s last console, the Dreamcast, with its PowerVR architecture. It has offered Ray traced solutions in mobile form with its PowerVR Wizard GPUs, again working within Vulkan. This was demonstrated at GDC 2017 and, although far from a real-world product at this point, we can be pretty sure it would not be asking these prices.

What about those frame-rates?

The cries from the internet were heard and Nvidia responded in typical Nvidia style, with a vague and misleading graph that is open to huge amounts of interpretation, so interpreting it is what I am going to do. You see, one of the main factors that has come to light is that Nvidia are now asking for a very restrictive NDA to be signed before any review hardware can be provided. As such, my only chance of testing these new cards is by buying one myself when released OR managing to snag a review one from an IHV such as Gigabyte, MSI et al. This is one of, if not the, biggest reasons I have a Patreon: I buy all my own equipment, games and hardware unless stated otherwise, so I am limited in what I can test, and it is why I need all the support to raise my subscriptions, views and thus my ability to get access to a greater breadth of review devices and code. We shall see what I can sort out between now and launch. We do have some info to help us draw some predictions though, and the cagey results from Nvidia help that greatly.

The graph above shows “relative” 4K/60 performance of the new 2080 card compared to the outgoing 1080 (a cheaper £800 card at least, still 74% more expensive mind). If the graph is to scale, then we have between 33-60% performance improvement on these games, with an average of about 35%, and this is without doubt with RTX features turned off. Taking that at face value, a game running at 46fps would hit 60. It is not known, however, whether these figures are low, high or average; some games can be lower, some higher, and these are likely the best-case scenario. For $150-£200 less than that price you can buy a 1080Ti, a card that would likely be within 10% of these results and possibly better them in some. Nvidia are aware of this and have pushed to provide more from the 2 year gap. The biggest addition sits within the RTX functions, which of course I have covered already; another is the touted DLSS. With this turned on we are seeing over a 2x improvement on the 1080, which is substantial and makes this far more appealing for those after a 4K/60 experience. Wait though, we have a catch.
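To make the arithmetic behind that 46fps example concrete, here is a minimal Python sketch. The percentages are the ones I read off the graph, so they carry the same "if the graph is to scale" caveat, and the helper names `projected_fps` and `required_improvement_pct` are purely illustrative.

```python
def projected_fps(base_fps: float, improvement_pct: float) -> float:
    """Scale a frame rate by a relative improvement given in percent."""
    return base_fps * (1 + improvement_pct / 100)


def required_improvement_pct(base_fps: float, target_fps: float) -> float:
    """Percentage uplift needed to move from base_fps to target_fps."""
    return (target_fps / base_fps - 1) * 100


# Assumption: 33%, 35% and 60% are estimates read from the graph, not measurements.
for pct in (33, 35, 60):
    print(f"+{pct}%: 46fps -> {projected_fps(46, pct):.1f}fps")

# How much uplift a 46fps game actually needs to reach 60fps.
print(f"46fps -> 60fps needs +{required_improvement_pct(46, 60):.1f}%")
```

Even the low end of the estimated range is enough to carry a 46fps title past 60, which is why that example works, but anything starting much below the mid-40s would still fall short.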

DLSS does not and will not work on all games, only those it has been designed for at present. This is not a driver function alone but an engine one, with current information pointing to the fact that the game needs to be rendered on the Nvidia learning servers to calculate how a frame is generated, and then best approximate that information and fill in the blanks using a vastly higher native resolution. The resulting file is then added to the driver, and you receive it with your Game Ready driver update, which uses this learned path to inject the missing data from a lower resolution into a higher final one. If that sounds a little like checkerboard or temporal injection, that’s because it is. The actual technique behind it, and how good it is, remains to be seen and shown, but essentially this is a newer, likely improved version of the PlayStation Pro methods to achieve a perceived higher resolution. The native resolution (as you would expect it) in these examples will be lower than 3840×2160, and this Deep Learning Super Sampling is taking the gaps in the data or image and filling them with interpolated, assumed or otherwise derived information from spatial and temporal elements.

As we saw with Pro titles, this is indeed an excellent way to increase IQ while rendering fewer pixels than would normally be needed, allowing games that could normally only manage 2560×1440 to run at a full 3840×2160 checkerboard (cb). The resulting image is softer than a native 4K one, as you lose texture detail slightly, but it is much sharper than a base 1440P image with a similar performance profile. Nvidia would likely get full access to your engine code to run through this process, allowing these titles to run slightly better than current 1440P games with an IQ somewhere between that and native 4K, an approach I applaud and have done for the past few years. Again though, Nvidia have been advocates for native resolutions and conditioned their audience to be the same, which has been and will likely be the sword they also have to fall on. I am sure this will slowly dissipate as people either convince themselves it is better here, or see the results and agree with something many of us and many teams have been saying for too long: using all this power and bandwidth just to render more pixels is a waste. I am very glad that Nvidia have taken this step, as much if not more so than the RT strides, as it means the technique will not become stigmatized within the PC space, and when AMD or Intel follow suit it will be accepted. A win-win for all.
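The pixel budgets behind that comparison can be sketched quickly in Python. One loud assumption: I treat checkerboard rendering as shading roughly half of the target grid per frame, which is the usual description of the Pro technique. The internal resolution DLSS actually renders at is not public, so nothing here should be read as a DLSS figure.

```python
def pixels(width: int, height: int) -> int:
    """Total pixel count for a given resolution."""
    return width * height


native_4k = pixels(3840, 2160)      # full 4K grid
native_1440 = pixels(2560, 1440)    # 1440P grid

# Assumption: checkerboard shades about half of the 4K grid each frame.
checkerboard_4k = native_4k // 2

print(f"Native 4K:       {native_4k:,} pixels")
print(f"Native 1440P:    {native_1440:,} pixels")
print(f"4K checkerboard: {checkerboard_4k:,} pixels shaded per frame")
print(f"Native 4K vs 1440P:       {native_4k / native_1440:.2f}x")
print(f"4K checkerboard vs 1440P: {checkerboard_4k / native_1440:.3f}x")
```

Under that assumption a 4K checkerboard frame shades only about 12.5% more pixels than native 1440P, against 2.25x for native 4K, which is why the two sit in a similar performance profile.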

RTX vs GTX cost & performance comparison

Beware of the bar charts

It is very clear that Nvidia have played this graph well: choosing the 1080, a card clearly bandwidth bound, over the 1080Ti as the comparison is blatantly obvious. Bandwidth is something the new 2080 addresses, with its 448GB/s being very close to the Ti model’s 484GB/s. We need to concentrate on this, as the specs highlight that the 2080 COULD be slower in bandwidth-bound scenarios than the cheaper 1080Ti. In addition, showing HDR games, a demo and other titles that likely favour this example is a specific mix, with HDR having more of an impact on Nvidia cards than AMD, something I am sure has been resolved or improved with the new Turing cards.

If you are launching a flagship model, why would you not put that front and centre, as was done with all the Ray Traced games and demos? The 2070 is not even available at launch, another questionable sign. The drive for the improved Founders card, with its appeal to overclocking AND now improved boost clocks over the vendor cards (which will likely not be the case, as OC is a lottery at times), was another. No doubt the faster and wider 2080Ti should be around 27% faster in bandwidth scenarios than the 1080Ti, but this will not always be the case, and any pipeline and shader improvements are also an unknown factor. Adding to the paper specs, the range-topping Ti will be an almost equal 29% more powerful than the last. You can see all the details in the box above, including one of the most important: bang for buck, or cost per teraflop. The flow of cards, from the current new prices on Amazon.com, shows a startling trend of a steep rise with each card, and over 2x the cost on the 2080Ti is a clear example of this. The 1070 is still by far the best performance to cost ratio.
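For anyone wanting to check the paper-spec comparisons themselves, this is a rough Python sketch. The two bandwidth figures are the ones quoted above; the 2080Ti figure is only what the "around 27%" claim implies, not a quoted spec. `cost_per_teraflop` is a hypothetical helper and the numbers passed to it are placeholders, not real prices or specs, so plug in the values from the comparison box.

```python
def pct_difference(new: float, old: float) -> float:
    """Percentage change going from old to new (negative means lower)."""
    return (new / old - 1) * 100


def cost_per_teraflop(price: float, teraflops: float) -> float:
    """Bang for buck: currency units paid per teraflop of paper compute."""
    return price / teraflops


BW_2080 = 448.0    # GB/s, as quoted in the text
BW_1080TI = 484.0  # GB/s, as quoted in the text

print(f"2080 vs 1080Ti bandwidth: {pct_difference(BW_2080, BW_1080TI):+.1f}%")

# Bandwidth implied by "around 27% faster than the 1080Ti" (derived, not quoted).
implied_2080ti_bw = BW_1080TI * 1.27
print(f"Implied 2080Ti bandwidth: ~{implied_2080ti_bw:.0f}GB/s")

# Placeholder inputs only: a 1000-unit card with 10 teraflops.
print(f"Example cost per teraflop: {cost_per_teraflop(1000, 10):.0f}")
```

On those quoted figures the 2080 has roughly 7% less raw bandwidth than the 1080Ti, which is the basis for saying it COULD lose out in bandwidth-bound scenarios before any architectural gains are counted.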

Summary

No matter if you think I am wrong, blindly follow Nvidia and have pre-ordered this card (I wish you hadn’t though, as that makes it harder for the rest of us to try and keep our pants on), you must see the info is not as clear as it should be. They are not lying of course, but as the saying goes there are lies, damned lies and statistics, and this chart has stats all over its face. My advice remains: wait for reviews and see if my like-for-like improvement predictions come true. If you are looking forward to some shiny Ray traced extras, then the price of entry is high but known. If, on the other hand, a significant leap over Pascal performance is your aim, you may not be quite as satisfied with the results next week.

The biggest question left is whether DLSS will be locked exclusively to the newer cards, which I hope not. The RTX functions of course will be, and they will naturally raise your CPU requirements along with memory, which may in fact give the 2080Ti a further advantage with its 3GB increase over the 2080 and the 2070, the latter of which is releasing later. It would be a great shame if Pascal at least is not treated to a form of DLSS or reconstruction methods to aid those cards’ performance and appeal, although knowing Nvidia’s previous tactics and marketing ploys I think we already know the answer to that. I hope I can secure a 2080, or better yet a Ti, to put it through its paces, and you can help by supporting me on Patreon to buy one so I can deliver my standard in-depth, unbiased review on whether this is the future of PC gaming and not all shadows and mirrors.