Video game graphics have evolved over the years from the early pixels and vector graphics of the ’70s to a point where they are almost unrecognizable. One thing has remained constant, though, and it has been the single winning solution: rasterization. It is the basis of pretty much all real-time computer graphics and has formed a large portion of offline rendering; Pixar’s RenderMan was itself a raster-based application. When we moved to 3D rendering, the third axis for depth added much more complexity to the process, but rasterization was retained through this step forward. Improvements were of course made, including splitting the image into chunks or tiles so that pixels can be calculated and shaded in parallel across the GPU cores. Roughly, this is how a standard frame is drawn using this method:

- The scene is described by vectors and vertex points that place objects within 3D space, including their depth; these vertices define the triangles to be drawn.

- The scene is then projected onto a single plane as seen from the chosen camera position, transforming each point into its correct screen position using relatively simple linear algebra.

- The image from this viewpoint is then processed in sections (tiles) to reduce bandwidth and maximise performance. Each triangle is checked against the view frustum and tested to determine which pixels it covers, what colour it is and whether it is visible, and the required colour is then set.

- Additional passes then run, including rendering the scene again from the point of view of the light sources to determine shadows and lighting, plus post-processing effects such as motion blur, depth of field, bloom, alpha effects, ambient occlusion and so on.
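The projection and coverage steps above can be sketched in plain Python. This is a minimal, CPU-side illustration under stated assumptions, not how a GPU actually schedules the work: the camera is assumed to look down +z, each pixel is sampled once at its centre, there is no frustum clipping (points are assumed to be in front of the camera), and `project`, `edge` and `rasterize` are hypothetical names. Depth is compared as interpolated 1/z, which is linear in screen space.

```python
import math

def project(v, fov_deg, width, height):
    """Perspective-project a camera-space point (x, y, z) to pixel coordinates.
    Assumes the camera looks down +z and that z > 0 (point is in front of it)."""
    x, y, z = v
    f = 1.0 / math.tan(math.radians(fov_deg) / 2)  # focal scale from field of view
    ndc_x, ndc_y = f * x / z, f * y / z             # perspective divide by depth
    px = (ndc_x + 1) * 0.5 * width                  # map [-1, 1] to [0, width]
    py = (1 - ndc_y) * 0.5 * height                 # flip y so row 0 is the top
    return (px, py, z)

def edge(a, b, p):
    """2D cross product: which side of the edge a->b the point p lies on."""
    return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])

def rasterize(tri, color, frame, depth):
    """Write `color` into every pixel whose centre the screen-space triangle covers,
    but only where it is nearer than what the depth buffer already holds."""
    a, b, c = tri
    area = edge(a, b, c)
    if area == 0:
        return                                      # degenerate: covers nothing
    for y in range(len(frame)):
        for x in range(len(frame[0])):
            p = (x + 0.5, y + 0.5)                  # sample at the pixel centre
            # Barycentric weights; all >= 0 means the centre is inside (either winding).
            w0 = edge(b, c, p) / area
            w1 = edge(c, a, p) / area
            w2 = edge(a, b, p) / area
            if w0 < 0 or w1 < 0 or w2 < 0:
                continue
            # Interpolate 1/z, which is linear in screen space (perspective-correct).
            inv_z = w0 / a[2] + w1 / b[2] + w2 / c[2]
            if inv_z > depth[y][x]:                 # larger 1/z means nearer
                depth[y][x] = inv_z
                frame[y][x] = color

print(project((0.0, 0.0, 5.0), 90.0, 100, 100))    # (50.0, 50.0, 5.0): screen centre

W = H = 4
frame = [[None] * W for _ in range(H)]
depth = [[0.0] * W for _ in range(H)]              # 0.0 = infinitely far away
big = [(-10, -10), (20, -10), (-10, 20)]           # covers every pixel centre
rasterize([(x, y, 2.0) for x, y in big], "near", frame, depth)
rasterize([(x, y, 10.0) for x, y in big], "far", frame, depth)
print(frame[0][0])  # prints: near (the farther triangle fails the depth test)
```

Drawing order does not matter here: the depth test, not submission order, decides which triangle ends up in each pixel.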

The core method is far more complicated than this, of course, but as a high-level overview this is how it works, and it has worked well. What it is not so good at is pretty much everything that makes life look like, well, life: namely light and shadow. You see, like most rendering methods, the one described is an approximation, or a hack. Many effects and techniques in use today exist only because of the limitations this method is built around, e.g. how light reacts with objects, materials and reflections. The same goes for shadows and ambient lighting, including global illumination: the bouncing of photons and their subsequent occlusion, distortion or absorption according to surface properties and reflectance. These are all problems that GPU programmers have been, and always will be, solving with more convincing yet efficient solutions. What if this problem could simply be removed, though? Well, it can, sort of. Contrary to the Nvidia presentation, it does NOT “just work”; nothing is ever that simple, but it does solve much of this better.

Ray Tracing for the win

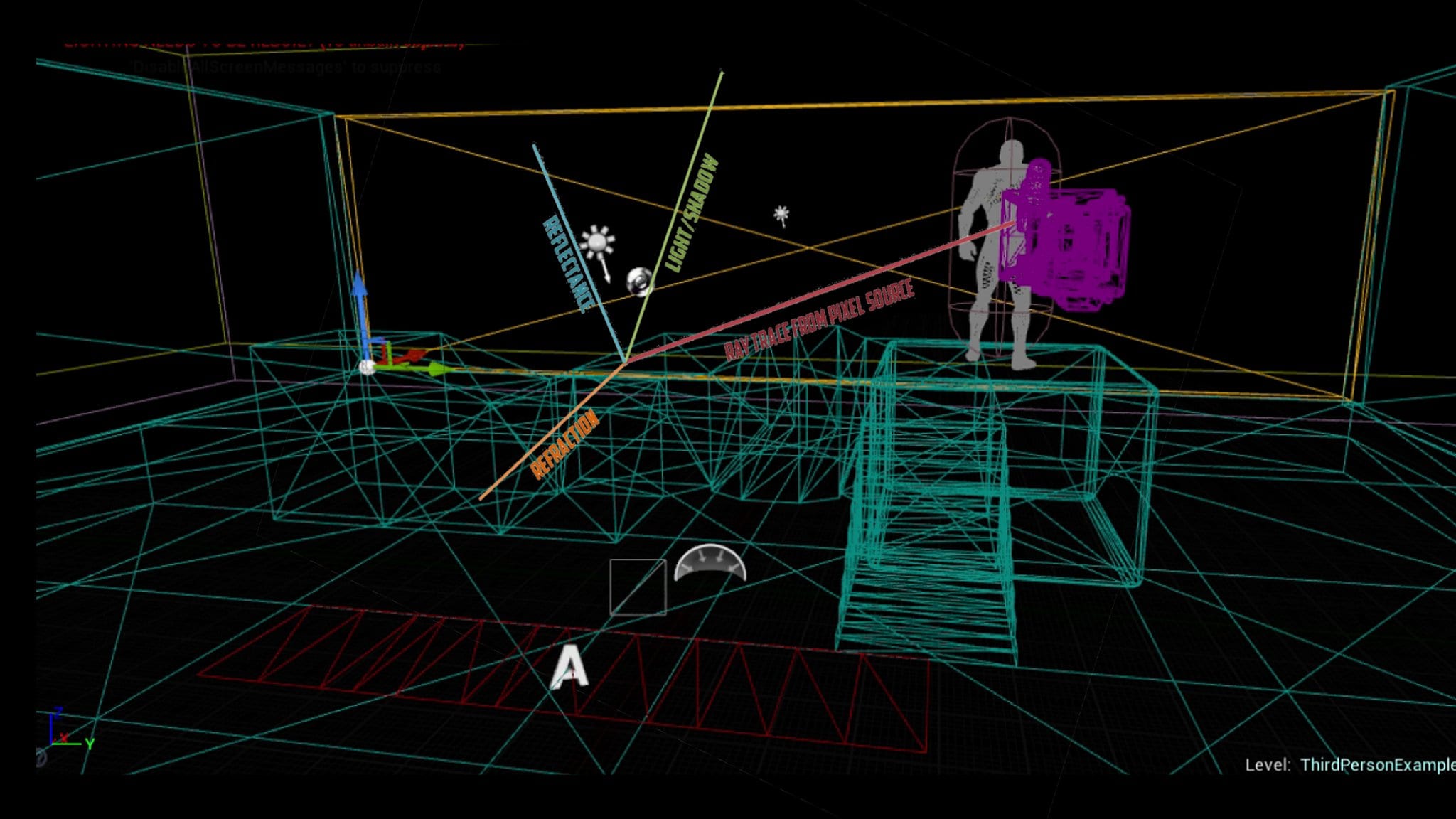

Rasterisation was not the only method known when video games started out. Ray tracing existed at the time too, and it carried a great many benefits over the other. One single factor, though, ruled it out of pretty much all animated rendering back then: computational cost. Rasterisation is fast but needs a lot of work (see above) to deliver convincing images; ray tracing is almost exactly the other way around. This is still true today, and the reasons are probably more numerous than you may think. Unlike raster methods, ray tracing is naturally suited to 3D scenes and removes the limitation of flattening this multi-dimensional world into a single, isolated viewport. Rather than working out only what the viewer can see within this finite scope, rays are cast per pixel from the viewport into the scene using a recursive algorithm. This means a ray does not simply stop when it hits an object: new rays are spawned from the hit point, and the process continues up to a set number of bounces or until the result is resolved to a sufficient level, again decided by the programmer or artist.
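To make the per-pixel casting concrete, here is a sketch of a pinhole camera that generates one primary ray per pixel and intersects it with a sphere. The camera conventions (origin at (0, 0, 0), looking down +z), the sphere-only scene and the function names are all assumptions for illustration; a real tracer would test triangles through an acceleration structure and then spawn further rays from each hit.

```python
import math

def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def primary_ray(px, py, width, height, fov_deg):
    """Ray from a pinhole camera at the origin, looking down +z, through the
    centre of pixel (px, py). Returns (origin, unit direction)."""
    scale = math.tan(math.radians(fov_deg) / 2)
    aspect = width / height
    x = (2 * (px + 0.5) / width - 1) * aspect * scale
    y = (1 - 2 * (py + 0.5) / height) * scale
    return (0.0, 0.0, 0.0), normalize((x, y, 1.0))

def hit_sphere(origin, direction, center, radius):
    """Distance t to the nearest hit in front of the origin, or None.
    Solves |o + t*d - c|^2 = r^2, which simplifies because d has unit length."""
    oc = tuple(o - k for o, k in zip(origin, center))
    b = sum(d * v for d, v in zip(direction, oc))
    c = sum(v * v for v in oc) - radius * radius
    disc = b * b - c
    if disc < 0:
        return None                      # the ray misses the sphere entirely
    root = math.sqrt(disc)
    for t in (-b - root, -b + root):     # nearer root first
        if t > 1e-6:                     # ignore hits behind the ray origin
            return t
    return None

# One primary ray per pixel over a small grid, drawn as ASCII.
W, H = 24, 12
for py in range(H):
    row = ""
    for px in range(W):
        origin, d = primary_ray(px, py, W, H, 60.0)
        row += "#" if hit_sphere(origin, d, (0.0, 0.0, 5.0), 1.5) is not None else "."
    print(row)
```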

This ray is where the name comes from, and it allows a scene to be drawn with as much or as little detail as needed. Each traced pixel sends a ray into the scene, finds the first object it hits and requests that object’s material properties (albedo, roughness etc.); further rays are then sent out from this point, namely three:

- Reflectance – A diffuse surface scatters light in all directions and therefore has a low specular reflectance. A smooth, highly specular surface such as glass or metal uses a Fresnel calculation, which determines how much light is reflected depending on the viewing angle, while the reflected ray is mirrored about the surface normal, perturbed by any microfacets. In addition, the light the material does not absorb is re-emitted into the scene (radiosity), and this forms the key function of global illumination.

- Refraction – This is where the incoming light is bent as it passes across or through the material. Think of the caustics you see when you shine light through a glass or under water: the light rays are focused into a higher concentration. It is also why objects look distorted through glass or water.

- Shadow/Occlusion – Once a surface is hit, a new ray is cast towards the nearest (or included) light sources to determine whether the point is in light or shade. If the ray is occluded by another object, the pixel is shaded accordingly.
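The three secondary ray types above boil down to a little vector maths. The sketch below assumes unit-length vectors and uses Snell’s law for refraction and Schlick’s approximation for the Fresnel term (a common real-time choice, not the only one); the helper names are hypothetical. The shadow ray needs no new direction maths: it is aimed straight at the light and only tested for occlusion.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def reflect(d, n):
    """Mirror the incoming direction d about the unit normal n: r = d - 2(d.n)n."""
    k = 2 * dot(d, n)
    return tuple(di - k * ni for di, ni in zip(d, n))

def refract(d, n, eta):
    """Bend unit direction d through a surface with unit normal n (pointing back
    towards the incoming ray), where eta = n1 / n2 (e.g. air to glass = 1 / 1.5).
    Returns None on total internal reflection, when no ray is transmitted."""
    cos_i = -dot(d, n)
    sin2_t = eta * eta * (1 - cos_i * cos_i)      # Snell's law, squared
    if sin2_t > 1:
        return None
    cos_t = math.sqrt(1 - sin2_t)
    return tuple(eta * di + (eta * cos_i - cos_t) * ni for di, ni in zip(d, n))

def fresnel_schlick(cos_i, n1, n2):
    """Schlick's approximation: fraction of light reflected (the rest refracts)."""
    r0 = ((n1 - n2) / (n1 + n2)) ** 2
    return r0 + (1 - r0) * (1 - cos_i) ** 5

up = (0.0, 1.0, 0.0)
print(reflect((0.6, -0.8, 0.0), up))            # bounces back up at the same angle
print(refract((0.6, -0.8, 0.0), up, 1 / 1.5))   # bends towards the normal in glass
print(fresnel_schlick(1.0, 1.0, 1.5))           # about 4% reflected head-on
```

The Fresnel value is what makes glass look almost transparent head-on yet mirror-like at grazing angles: it is roughly 0.04 at `cos_i = 1` and rises to 1.0 as `cos_i` approaches 0.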

Again, this is a high-level overview that simplifies the process, but you can already see the benefits this solution offers. Rather than drawing a view from each light source in the scene separately just to determine which surfaces are in light or shadow, building a shadow map and then discarding the rest, we now get this calculated accurately per pixel within our ray tracing path. In addition, depending on how many bounces we allow each ray, we can have multi-bounce GI, handle multiple light sources such as area or directional lights, and refract light travelling behind the surface, all as part of this one sample route. The other big win is reflections, a huge limitation of the current method: if you cannot see an object or surface in the scene, you simply cannot reflect it without some other technique. Those techniques come down to three main approaches that are regularly used in modern game engines and have been used in much older ones.
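The per-pixel shadow test described above can be sketched as one shadow ray per light, with a light contributing only if its ray gets through. This is an illustration under simplifying assumptions: spheres stand in for scene geometry, lighting is a bare Lambert cosine term with no distance falloff or colour, and all names are hypothetical.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def segment_blocked(p, q, center, radius, eps=1e-6):
    """True if the shadow ray from p to q crosses the surface of the sphere
    before reaching q (eps avoids a surface 'shadowing itself')."""
    d = tuple(qi - pi for pi, qi in zip(p, q))
    oc = tuple(pi - ci for pi, ci in zip(p, center))
    a = dot(d, d)
    b = 2 * dot(oc, d)
    c = dot(oc, oc) - radius * radius
    disc = b * b - 4 * a * c
    if disc < 0:
        return False
    root = math.sqrt(disc)
    t0, t1 = (-b - root) / (2 * a), (-b + root) / (2 * a)
    return eps < t0 < 1 - eps or eps < t1 < 1 - eps

def direct_light(point, normal, lights, occluders):
    """Sum a simple Lambert (cosine) term from every light a shadow ray can reach."""
    total = 0.0
    for light_pos, intensity in lights:
        if any(segment_blocked(point, light_pos, c, r) for c, r in occluders):
            continue                                  # shadow ray blocked: in shade
        to_light = tuple(l - p for l, p in zip(light_pos, point))
        cos_theta = dot(normal, to_light) / math.sqrt(dot(to_light, to_light))
        total += intensity * max(0.0, cos_theta)
    return total

lights = [((0.0, 5.0, 0.0), 1.0), ((5.0, 5.0, 0.0), 1.0)]
blocker = [((0.0, 2.5, 0.0), 1.0)]   # sits between the floor point and the first light
print(direct_light((0.0, 0.0, 0.0), (0.0, 1.0, 0.0), lights, blocker))
```

Adding more lights is just more entries in the list; each one costs one extra shadow ray per pixel rather than a whole extra shadow-map pass.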

Cubemaps – These are precomputed images captured with a wide angle at fixed points within a scene. They are then used to provide reflections of the surrounding scenery, for example on buildings or on cars in racing titles. This allows off-screen reflections to work, but (most likely) not of dynamic objects.
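A cubemap reflection lookup amounts to reflecting the view ray and asking which face of the pre-captured cube it points at. The sketch below assumes a simplified face naming and u/v orientation (OpenGL and Direct3D each define their own exact layout) and hypothetical helper names. Because the cube was captured once at a fixed point, anything that moved since then simply is not in it.

```python
def reflect(d, n):
    """Mirror view direction d about unit normal n."""
    k = 2 * sum(a * b for a, b in zip(d, n))
    return tuple(di - k * ni for di, ni in zip(d, n))

def cubemap_lookup(direction):
    """Return the cube face a (non-zero) direction points at and the (u, v) in
    [0, 1] on it. Face names and u/v orientation are a simplified convention
    assumed here, not any specific graphics API's layout."""
    x, y, z = direction
    ax, ay, az = abs(x), abs(y), abs(z)
    if ax >= ay and ax >= az:                       # the dominant axis picks the face
        face, major, u, v = ("+x" if x > 0 else "-x"), ax, z, y
    elif ay >= az:
        face, major, u, v = ("+y" if y > 0 else "-y"), ay, x, z
    else:
        face, major, u, v = ("+z" if z > 0 else "-z"), az, x, y
    # Divide by the dominant component to land on the face, then map [-1, 1] -> [0, 1].
    return face, (u / major + 1) / 2, (v / major + 1) / 2

# A car bonnet facing up reflects a view ray that hits it at an angle.
view_dir = (0.0, -0.5, 1.0)
print(cubemap_lookup(reflect(view_dir, (0.0, 1.0, 0.0))))   # ('+z', 0.5, 0.75)
```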

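Screen space reflections – These were invented to solve the big issue with cubemaps, namely dynamic objects. They reuse the depth (Z-buffer) and colour of the frame already rendered: the view ray is reflected about the surface normal, so the view and the reflected ray lie on either side of it, and the reflected ray is marched through the depth buffer until it hits something on screen. The catch is that anything not on screen cannot be reflected.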
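Screen space reflections can be illustrated with a deliberately reduced 1D case: a single row of the depth buffer, an orthographic view and a reflected ray marched in fixed steps. The step size and the `thickness` tolerance are assumed values, and the function is a hypothetical sketch rather than any engine’s implementation; it shows both the hit case and the failure case where the ray leaves the screen.

```python
import math

def ssr_trace(start, direction, depth, color, step=0.25, max_steps=200, thickness=0.5):
    """March a reflected ray across a 1D 'screen' (one depth value per column,
    orthographic). Returns the colour of the first stored surface the ray passes
    just behind, or None if the ray leaves the screen or runs out of steps."""
    x, z = start
    dx, dz = direction
    for _ in range(max_steps):
        x += dx * step
        z += dz * step
        col = math.floor(x)
        if col < 0 or col >= len(depth):
            return None                 # off screen: SSR has no data to reflect
        if depth[col] <= z <= depth[col] + thickness:
            return color[col]           # ray is just behind the visible surface: a hit
    return None

# Larger z = farther from the camera. Column 4 holds a box nearer than the wall.
depth = [10.0, 10.0, 10.0, 10.0, 8.5, 10.0, 10.0, 10.0]
color = ["wall"] * 4 + ["box"] + ["wall"] * 3
print(ssr_trace((1.5, 10.0), (1.0, -0.5), depth, color))   # box
print(ssr_trace((1.5, 10.0), (-1.0, -0.5), depth, color))  # None: ray left the screen
```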

Planar reflections – Much like the shadow drawing method mentioned earlier, this involves rendering the scene again from another angle, allowing off-screen objects to be reflected in a mirror, for example. It is expensive in both computation and memory, as it really requires everything to be done twice; think of a rear-view mirror in racing titles as a basic example.
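The extra pass behind planar reflections starts by mirroring the scene across the reflector’s plane. The sketch below assumes a plane written as n . x + d = 0 with a unit normal; the function names are hypothetical. In a real renderer this matrix is combined with the camera transform and every object is drawn again, which is where the doubled cost comes from.

```python
def reflect_point(p, n, d):
    """Mirror point p across the plane n . x + d = 0 (n must be unit length)."""
    dist = sum(pi * ni for pi, ni in zip(p, n)) + d     # signed distance to the plane
    return tuple(pi - 2 * dist * ni for pi, ni in zip(p, n))

def reflection_matrix(n, d):
    """The same mirror as a 4x4 matrix, which a renderer can fold into its view
    transform to draw the whole scene a second time from the mirrored side."""
    nx, ny, nz = n
    return [
        [1 - 2 * nx * nx, -2 * nx * ny, -2 * nx * nz, -2 * nx * d],
        [-2 * ny * nx, 1 - 2 * ny * ny, -2 * ny * nz, -2 * ny * d],
        [-2 * nz * nx, -2 * nz * ny, 1 - 2 * nz * nz, -2 * nz * d],
        [0.0, 0.0, 0.0, 1.0],
    ]

def transform(m, p):
    """Apply a 4x4 matrix to a 3D point (w = 1)."""
    v = (*p, 1.0)
    return tuple(sum(m[r][c] * v[c] for c in range(4)) for r in range(3))

# A mirror lying in the plane y = 1 (normal pointing up, so d = -1).
n, d = (0.0, 1.0, 0.0), -1.0
scene = [(0.0, 3.0, 0.0), (2.0, 1.5, -4.0)]
mirrored = [transform(reflection_matrix(n, d), v) for v in scene]
print(mirrored)
```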

So, why do we not just ray trace all of this now? The answer is simple: hardware and real-time requirements make it all but impossible. As mentioned above, rasterization is fast above all other things and ray tracing is slow above all other things, and on current or even upcoming GPUs a fully ray-traced game is still not a viable option.

Mix and match

What is possible is a hybrid of the two, and that is what we have recently seen from Nvidia with its new RTX 2070 and 2080 cards. I will cover these in more depth later, but for now: Nvidia has designed a dedicated hardware function into these cards (the so-called RT cores) to handle ray-traced calculations within scenes. Based on the evidence shown, which I do need to read much more on, so bear with me, they are likely mixing ray bundles (a combined set of rays that reduces cost at the expense of accuracy; I would imagine Shadow of the Tomb Raider is using this for its shadow option) with temporal reconstruction and AI to fill in the gaps. The simplest way to describe this is Quake 2 running on a fully path-traced engine. As the image moves it breaks down into points, as if it were constructed from dots of the artist’s pen. While moving, the gaps are noticeable and objects break down; stand still and it looks better, as it also does in very bright or dark areas. Using stochastic algorithms, effectively random sample points, the result becomes more accurate the more samples you take, and over time you can use this jittered approach to inject or estimate the missing data. This is really how current temporal reconstruction AA works, and even the much-vaunted checkerboard rendering. The result looks almost like a point cloud of the world, except that each point is an individual ray from the camera hitting a surface.
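The stochastic sampling and temporal accumulation described above can be shown with a toy pixel. The sketch assumes a pixel that is exactly half lit, so every random ray returns either fully lit or fully dark (the speckled look while moving); blending one sample per frame into a history value converges towards the true 0.5. The names and the `alpha` blend factor are illustrative assumptions, not how DLSS or any particular TAA implementation works.

```python
import random

def trace_one_sample(rng):
    """Stand-in for tracing one randomly jittered ray through a pixel. This pixel is
    assumed to be exactly half lit, so each ray returns 1.0 or 0.0 with equal odds."""
    return 1.0 if rng.random() < 0.5 else 0.0

def accumulate(frames, rng, alpha=None):
    """Blend one noisy sample per frame into a per-pixel history value.
    alpha=None keeps a plain running mean (camera standing still); a fixed alpha
    becomes an exponential moving average once 1/n drops below it, so old samples
    fade out, which is what you want once the view moves."""
    history = 0.0
    for n in range(1, frames + 1):
        sample = trace_one_sample(rng)
        weight = 1.0 / n if alpha is None else max(alpha, 1.0 / n)
        history += weight * (sample - history)
    return history

rng = random.Random(42)
for frames in (1, 4, 64, 1024):
    print(frames, accumulate(frames, rng))   # drifts towards 0.5 as frames grow
```

The trade-off is visible in the two modes: the running mean converges cleanly but smears when things move, while the moving average reacts quickly but never fully stops flickering.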

What we have in these demos is that most of the scene is still calculated using rasterization, while certain functions, such as shadows or reflections (i.e. light), are generated by ray tracing. The Battlefield V section was a perfect example of the benefits this solution offers, within reason. Aside from the obvious shiny car scenario (this was a demo, after all), it highlighted just what real reflections can offer on a visual and immersive level. Seeing the off-screen explosion reflected in the car could only be done using this method, or a combination of the others mentioned, which would likely be less convincing and possibly more expensive. It expands the possibilities for gameplay and design choices beyond what the current constraints allow. The recent shift to physically based shading will help here, as it works just as well, if not better, with these new RT solutions. Using the BRDFs and the microfacet deviations from the normal means light will appear more realistic if properly implemented across the variety of material properties. Ray tracing does not give you any of this by itself; PBR is still required.
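Ray tracing decides where light travels, but how much a surface reflects towards the eye still comes from the BRDF. As one example of the microfacet term, here is the GGX (Trowbridge-Reitz) normal distribution used by many PBR pipelines. The `alpha = roughness**2` remapping is a common convention assumed here, the names are hypothetical, and the rest of a full BRDF (Fresnel and geometry terms) is left out for brevity.

```python
import math

def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def ggx_ndf(n_dot_h, roughness):
    """GGX / Trowbridge-Reitz normal distribution: relative density of microfacets
    whose normal lines up with h. Uses alpha = roughness**2 (assumed convention)."""
    a2 = (roughness * roughness) ** 2
    denom = n_dot_h * n_dot_h * (a2 - 1) + 1
    return a2 / (math.pi * denom * denom)

def specular_lobe(normal, to_light, to_eye, roughness):
    """Evaluate the distribution at the half vector between light and eye directions."""
    h = normalize(tuple(l + e for l, e in zip(normalize(to_light), normalize(to_eye))))
    return ggx_ndf(max(0.0, dot(normalize(normal), h)), roughness)

up = (0.0, 1.0, 0.0)
for roughness in (0.2, 0.5, 0.9):
    mirror = specular_lobe(up, (1.0, 1.0, 0.0), (-1.0, 1.0, 0.0), roughness)
    off = specular_lobe(up, (1.0, 1.0, 0.0), (0.0, 1.0, 0.0), roughness)
    print(roughness, mirror, off)   # smooth surfaces: tall, narrow highlight
```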

Again, the reflections in Control use this well, offering a much better level of specularity within the scene without the obvious drawbacks of screen space solutions. Shadows are another benefit: accurate shadows can be calculated from multiple sources and adjusted in real time for static and dynamic objects alike. They are still filtered, though. With insufficient rays, a ray-traced shadow would look almost like a hard-edged stencil shadow and suffer from undersampling, so the noise at the edges is blurred to create soft shadows. All of this falls well within my expectations of how this solution would work, but the same caveats as before still apply. To replace rasterization with ray tracing, or better, path tracing, you need a much higher level of computation, bandwidth and memory, not to mention the dreaded scourge of latency. I will cover this in another article, but current GPUs are all designed around solving rasterization problems: from wavefronts to caches, large pools of memory and EQAA, they have become very good at that job. Ray tracing does not share much of this as a method and introduces its own issues that are not highlighted in the mainstream.
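The shadow filtering described above can be illustrated with an area light sampled by several shadow rays. The 2D scene is an assumption chosen so the answer can be checked by hand: a light spanning x in [-1, 1] at y = 10, a wall at y = 5 blocking everything with x < 0, and receiver points on y = 0. One ray per point gives a hard, stencil-like edge; more rays give fractional visibility (a penumbra), and a small blur, standing in for a real denoiser, smooths what too few rays leave behind. Function names are hypothetical.

```python
def visibility(px, light_samples):
    """Fraction of shadow rays from receiver point (px, 0) that reach the light.
    Assumed 2D scene: the light is the segment y = 10, x in [-1, 1]; a wall at
    y = 5 blocks every ray that crosses it at x < 0."""
    visible = 0
    for i in range(light_samples):
        lx = -1 + (i + 0.5) * 2 / light_samples   # evenly spaced points on the light
        x_at_wall = (px + lx) / 2                  # where the ray crosses y = 5
        if x_at_wall >= 0:
            visible += 1
    return visible / light_samples

def box_blur(values, radius=1):
    """Average each value with its neighbours, a stand-in for a real denoiser."""
    out = []
    for i in range(len(values)):
        window = values[max(0, i - radius): i + radius + 1]
        out.append(sum(window) / len(window))
    return out

receivers = [-1.5, -0.5, 0.5, 1.5]
print([visibility(px, 1) for px in receivers])   # [0.0, 0.0, 1.0, 1.0] hard edge
print([visibility(px, 8) for px in receivers])   # [0.0, 0.25, 0.75, 1.0] penumbra
print(box_blur([visibility(px, 1) for px in receivers]))
```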

Don’t go into the light

Anti-Aliasing: A single ray cast into the scene lacks the coverage weighting that current methods have. If my ray from pixel x hits a triangle, how much of the pixel was actually inside the triangle: all of it? 75% of it? If this is not known we have a much bigger aliasing issue, i.e. sub-pixel movement, as pixels pop in and out of existence as we move. The quick answer is to send out more rays per pixel and increase the sampling, just like supersampling. But for a temporally stable image there is no fixed answer as to how many are needed. Sure, for very bright or dark areas the sample count can be much lower, but for a standard scene with a mixture of colour, movement and luminosity it likely runs into hundreds or thousands of samples per pixel. That is why we saw such heavy reliance on neural network reconstruction (DLSS) to improve the image and fill the gaps, injecting data into the undersampled parts from various other sources; again, see TAA.
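The coverage problem can be demonstrated with a hypothetical edge that covers the left 30% of a pixel. A single ray through the pixel centre reports 0% coverage, and more sub-samples per pixel (supersampling) approach the true value; the function names and the regular sub-sample grid are assumptions for illustration.

```python
def coverage(inside, n):
    """Estimate the fraction of a unit pixel covered by a shape using an n x n grid
    of sub-samples; n = 1 is a single ray through the pixel centre."""
    hits = sum(inside((i + 0.5) / n, (j + 0.5) / n) for i in range(n) for j in range(n))
    return hits / (n * n)

def left_edge(x, y):
    """Assumed example: a triangle edge covering the left 30% of the pixel."""
    return x < 0.3

for n in (1, 4, 16, 64):
    print(n * n, "samples:", coverage(left_edge, n))
# 1 sample: 0.0, 16: 0.25, 256: 0.3125, 4096: 0.296875 (true value 0.3)
```

Move that edge from x = 0.49 to x = 0.51 and the single-sample answer jumps from 0 to 1, which is the sub-pixel popping described above.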

Memory and Geometry increase: A big difference between ray tracing and raster is that you must keep much more of your world geometry in memory and active. Many of the performance gains in raster methods come from culling triangles that are out of view, obscured or off-camera. This improves performance, reduces pressure on the GPU and frees budget for more in the visible scene. Now it is no longer that simple: before, a building that had just dropped out of the left of the camera would be culled; with RT we need to keep it in memory so that rays can sample it to draw its reflection in a nearby window. The same applies to alpha effects and character models. As the rendering load increases, the time left for other work shrinks and more memory and bandwidth are required.
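The contrast between the two approaches can be sketched with a top-down (2D) frustum test on bounding circles. Everything here is an assumption for illustration: the camera sits at the origin looking down +z with a 90° field of view, and the object names are hypothetical. The rasterizer only needs what passes the test; a ray tracer has to keep the whole list resident, because a reflection ray may still hit the building off to the left.

```python
import math
from dataclasses import dataclass

@dataclass
class Bounds:
    name: str
    x: float
    z: float
    radius: float

def in_view(b, half_fov_deg=45.0, near=0.1, far=100.0):
    """Top-down frustum test for a bounding circle; camera at the origin looking
    down +z. An object is culled if it lies entirely outside any one plane."""
    a = math.radians(half_fov_deg)
    planes = [                                # (inward normal (nx, nz), offset)
        ((math.cos(a), math.sin(a)), 0.0),    # left edge of the view
        ((-math.cos(a), math.sin(a)), 0.0),   # right edge of the view
        ((0.0, 1.0), -near),                  # near plane: z >= near
        ((0.0, -1.0), far),                   # far plane: z <= far
    ]
    for (nx, nz), offset in planes:
        if nx * b.x + nz * b.z + offset < -b.radius:
            return False
    return True

scene = [
    Bounds("tower_ahead", 0.0, 20.0, 2.0),
    Bounds("building_just_off_left", -50.0, 10.0, 5.0),
    Bounds("wall_behind_camera", 0.0, -30.0, 3.0),
]
raster_set = [b.name for b in scene if in_view(b)]   # what a rasterizer draws
rt_resident = [b.name for b in scene]                # what a ray tracer must keep
print(raster_set)    # ['tower_ahead']
print(rt_resident)
```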

Recursive/Secondary rays: The single biggest benefit comes from the secondary, tertiary and further rays that are cast into the scene, x amount per pixel. This is where the gains in image accuracy, and the solutions to other problems, come from. The issue is that these rays are not coherent, so you need a method to balance out their random nature and reduce your workload, such as bounding boxes. This is pretty much what rasterization resolved with BSP (Binary Space Partitioning), which I covered in my Wolfenstein and Doom videos.
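Bounding boxes are the usual way to tame incoherent rays: a cheap ray-box test rejects whole groups of triangles before any exact intersection work. Below is the standard slab test plus a flat, one-level grouping; the flattening is a simplification, since real acceleration structures such as bounding volume hierarchies (BVHs) nest these boxes in a tree. Names are hypothetical.

```python
import math

def ray_hits_box(origin, direction, box_min, box_max):
    """Slab test: does the ray origin + t*direction (t >= 0) pass through the box?"""
    t_near, t_far = 0.0, math.inf
    for o, d, lo, hi in zip(origin, direction, box_min, box_max):
        if d == 0.0:
            if o < lo or o > hi:
                return False        # parallel to this slab and outside it
            continue
        t1, t2 = (lo - o) / d, (hi - o) / d
        t_near = max(t_near, min(t1, t2))
        t_far = min(t_far, max(t1, t2))
        if t_near > t_far:
            return False            # slab intervals do not overlap: miss
    return True

def candidates(origin, direction, groups):
    """Only objects inside boxes the ray actually enters need exact triangle tests."""
    found = []
    for box_min, box_max, objects in groups:
        if ray_hits_box(origin, direction, box_min, box_max):
            found.extend(objects)
    return found

groups = [
    ((-1, -1, -1), (1, 1, 1), ["tri_a1", "tri_a2"]),
    ((10, -1, -1), (12, 1, 1), ["tri_b1"]),
]
print(candidates((0, 0, -5), (0, 0, 1), groups))   # ['tri_a1', 'tri_a2']
```

Incoherent secondary rays each take a different path through these boxes, which is why the hardware cost is in traversal rather than in the final triangle tests.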

Is this the future?

With all that said, the question asked at the very start of this article is still to be answered, and the answer is: it depends. In the medium term, i.e. the next 5-8 years or so, I would say no, it is still not the most pragmatic and efficient method of rendering for real-time games. The basis for this is that even offline renderers for films still use a hybrid of ray tracing, path tracing and rasterization to maximise the cost versus quality balance, and they have hours per frame to render; not until Monsters University did we see all light sources being generated this way. I think we will see a small and slow adoption of engines incorporating ray-traced functions, but not replacing rasterization, as a large portion of hardware on both consoles and PC will not be able to support them, which by nature makes them a limited solution for wider adoption. Just what the next-gen consoles from Sony and AMD have planned is yet to be shown, but at best a similar hybrid approach, combining an intelligent algorithm with hardware-based functions, would be my best guess.

The day of a complete, modern, ray-traced game in real time is still some way off, but the latest step forward is the closest we have come to a solution that may just work in various cases to achieve something remarkable. That date may not be visible just yet, but the future is bright enough that it could slip into view very soon.